Hi there and thanks so much for stopping by!

My name is Wolf Paulus. I’m a software engineer, innovator, and educator based in Sedona, Arizona (consequently a hiker and photographer). This is my journal, where I share thoughts and ideas on technology.

I’m an Assistant Professor of Computer Science at Embry-Riddle. Occasionally, I speak at conferences on topics ranging from Embedded/Mobile Technology to Emotional Prosody and Voice and Conversational User Interfaces.

LATELY / UPCOMING

Many of the new concepts I’m working on are best communicated through video clips or short films.

Take a look at some short HD films that I have created over the last few months and years.

“Amateur Professionalism”, a concept used since 2004, describes an emerging sociological and economic trend of people pursuing amateur activities to professional standards. That pretty much describes how I look at my photography work today.

If you like, take a look at some of my photos and the stories they tell.

RECENT POSTS

Could there be an effective computer for students that are REALLY on a budget?

During my final years in high school, I became captivated by the world of computers, succumbing to the allure of programmable devices. The late-afternoon bus rides back to the city, followed by brisk 20-minute walks to the local university, often ...

Installing Linux on Windows (straightforward it was not)

No question, Windows has come a long way. WSL, the "Windows Subsystem for Linux", was probably unimaginable while Bill Gates and later Steve Ballmer were running Microsoft. However, former Microsoft CEO Steve Ballmer resigned from the company's board in ...

Azure Serverless with a touch of TDD and CI/CD

AWS has enjoyed a stable market share in the low 30 percent range. Google and IBM seem to be on opposing trajectories, and Microsoft is steadily growing its market share, reaching 23% in early 2023. Popular cloud computing providers include services ...

AI-Tutor helping students to learn Python

[Cover art by Cyberpunk Portrait Generator API] You don't need to be a pessimist to imagine a world where students let AI tools do their homework and teachers use AI tools to evaluate students' submissions. Maybe there is still a ...

GPT – summarizing it cannot.

GPT-3, the third-generation Generative Pre-trained Transformer, is a neural network machine learning model trained using internet data to generate text. More often than not, however, I found that the T(ransformer) in GPT means "Transform into bullshit". Recently, I put the Lenovo ...

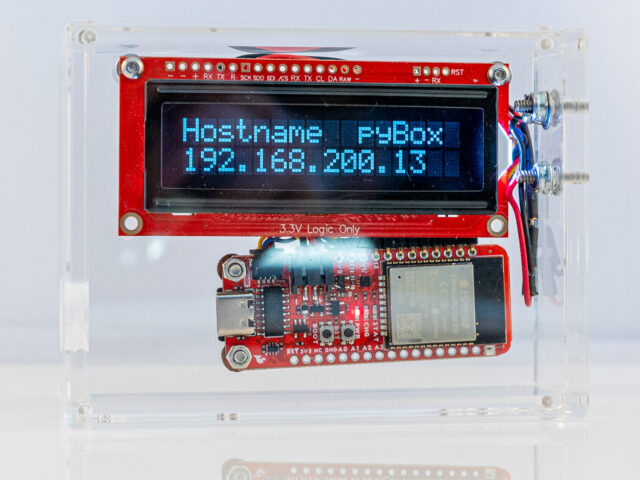

Programming pyBox

Probably the easiest way to develop software for pyBox and iterate reasonably fast is to use the PyCharm IDE with the MicroPython plugin enabled. Create a new project, enable MicroPython, and create three files: boot.py, main.py, and app.py. Remember, during the ...

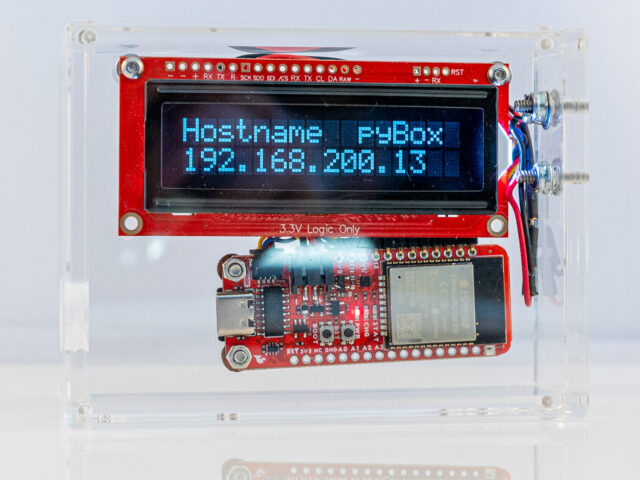

Porting MicroPython to pyBox

The standard ESP32 MicroPython port gets built for a generic ESP32 board. Compared to a generic board, pyBox has more to offer, most of which is not supported out of the box. Generic ESP32 pyBox 2GB Flash Memory 16GB Flash ...

pyBox Specification

Smallest viable MicroPython Computer? Dallas Semiconductor, acquired by Maxim Integrated in 2002, was a company that designed and manufactured analog, digital, and mixed-signal semiconductors. They had also designed the TINI board, a 68-pin SIMM, approximately 103 mm wide, 32 mm tall, and ...

Installing Java on macOS 13 Ventura

For some time now, Java has not been (pre-)installed anymore. Let’s fix that. As I'm writing this, Java 19.0.1 is the latest version and Adoptium is one of the best places to find prebuilt OpenJDK binaries. Adoptium was known as AdoptOpenJDK, before the project was ...

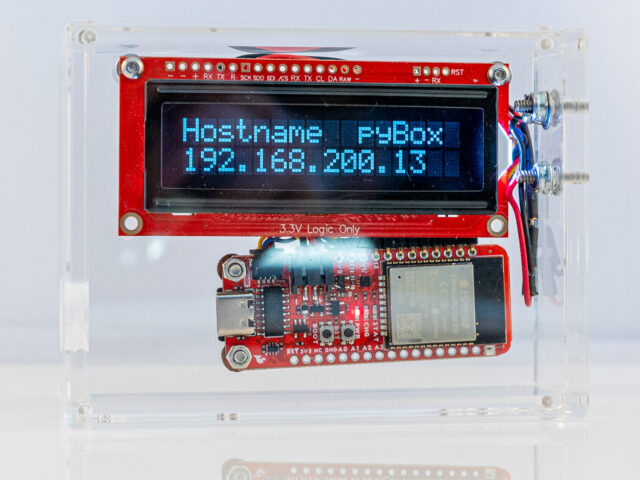

Introducing pyBox

Could there be a computer that is as nimble as it is useful, and still be fun and distinctly easy to program? pyBox, a small single-board computer, connected to a frugal 16x2 character display may be just that. pyBox is ...

Micro Python on ESP8266 (HUZZAH ESP8266)

The ESP8266 is a low-cost 80 MHz microcontroller with full WiFi support. It can be found on several breakout boards, with Adafruit's HUZZAH ESP8266 being one of the better ones. For about $10 you can own a small ...

Installing Tomcat on macOS 12 Monterey

The Servlet 5.0 specification is out and Tomcat 10.0.x supports it. Time to dive into Tomcat 10. Prerequisite: Java. Tomcat 10 requires Java version 8 or later, and since OS X 10.7, Java has not been (pre-)installed anymore. Let’s fix that ...

Onward to conversational applications

About 37%, or in numbers, 95 million U.S. adults have smart speakers in their homes. Half of them are daily active users. However, in the last two years, user growth has only been at 4%. (Smart Speaker Consumer Adoption Report ...

Installing Java on macOS 12 Monterey

For some time now, Java has not been (pre-)installed anymore. Let’s fix that. As I'm writing this, Java 17.0.3 is the latest LTS (Long Term Support) version and Adoptium is one of the best places to find prebuilt OpenJDK binaries. Adoptium was known as AdoptOpenJDK, ...